Dear Drs. xxxxxxxxxxxx and xxxxxxxxxxxxxxx,

I write to inquire whether the Journal of Clinical Epidemiology would consider a Perspective manuscript entitled xxxxxxxxxxxxxxxxxxxxxxxx.

The manuscript (~5,100 words, 37 references) addresses a problem that experienced clinical epidemiologists and HTA reviewers recognize but that the published literature has been reluctant to name directly: when Phase 3 trials produce overall survival benefits measured in weeks to a few months — statistically significant, clinically marginal — the center of gravity in value assessment shifts to forward-looking economic models that extrapolate survival curves decades beyond the observed trial window. At that horizon, the distinction between a conservative, empirically grounded projection and a flagrantly optimistic one is not self-evident from the model output. Both can be produced using individually defensible methods. Both will pass peer review. Only one approach will be systematically favored by the pharmaceutical sponsors preparing regulatory submissions.

This manuscript identifies where that asymmetry lives. It reverse-engineers five core analytical domains — parametric survival extrapolation, partitioned survival model construction, Markov state transition engineering, utility and QALY construction, and healthcare resource utilization modeling — and in each case distinguishes the conservative, realistic application from the optimistic, advocacy-oriented one. The analysis is not a theoretical taxonomy. It uses worked numerical examples and recent Phase 3 trial data across four tumor types to show concretely how selection among statistically equivalent model specifications produces lifetime survival estimates that diverge by years, and how those divergences compound across domains into cost-effectiveness conclusions that bear little resemblance to what the trial actually demonstrated.

The argument is structural, not accusatory. This is not an allegation of fraud or bad faith, and the manuscript does not contest the clinical efficacy of any compound discussed. The claim is that the dossier production process contains well-defined leverage points that systematically favor optimistic extrapolation, that these leverage points are individually defensible and collectively distorting, and that HTA bodies and payers currently lack the auditing vocabulary to identify them reliably. The manuscript closes with four low-cost, implementable transparency reforms — pre-specified survival model selection criteria, independent extrapolation, OS maturity thresholds before PFS-based models are accepted for formulary decisions, and mandatory disclosure of all fitted parametric specifications — that address the problem without imputing misconduct.

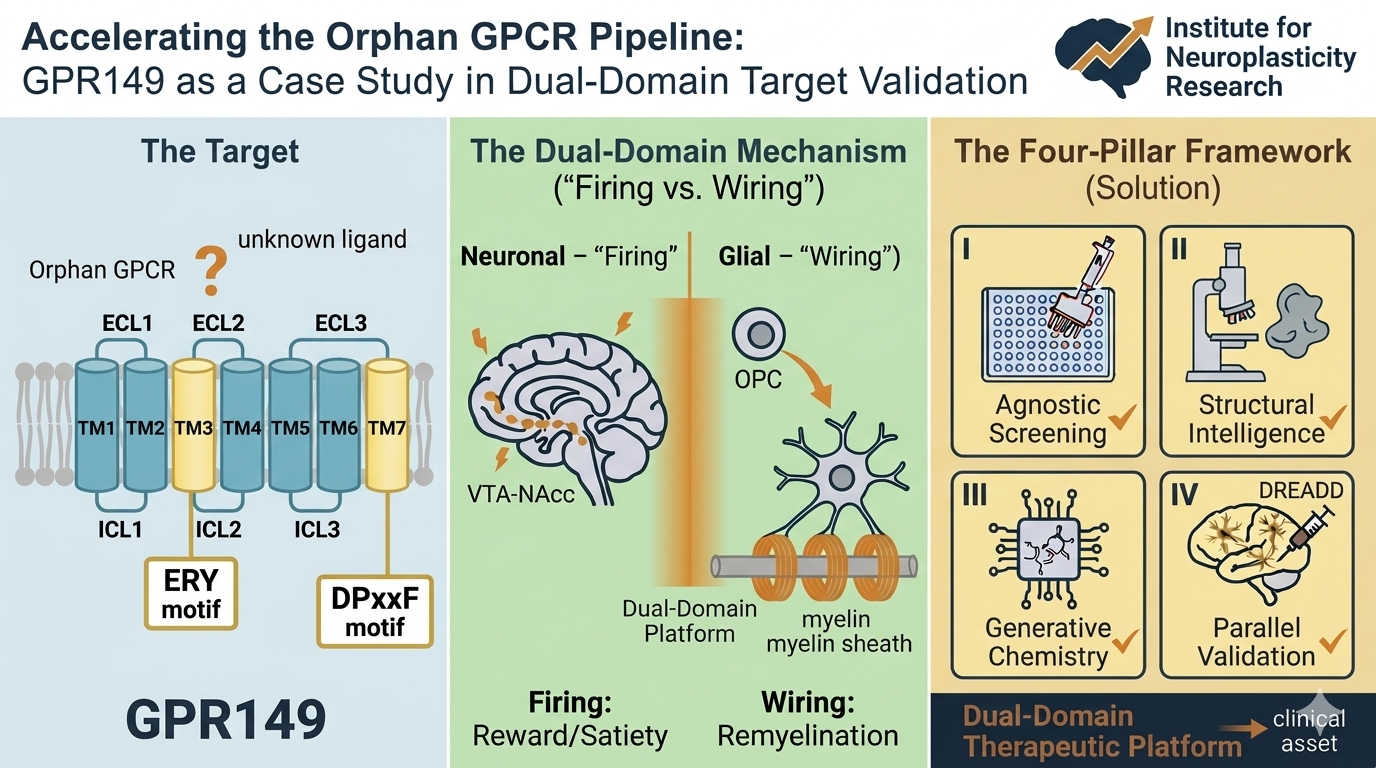

The author is an independent scholar and Director of Health Outcomes Research and Causal Evidence at the Institute for Neuroplasticity Research in Oak Ridge, Tennessee, with direct prior experience in pharmaceutical HEOR and survival analysis across hematologic oncology and solid tumor treatment compounds at major biopharma firms and smaller start-ups. He has no current financial relationship with any pharmaceutical firm or ongoing oncology trial. That independence is material: the manuscript carries no industry sponsorship or trial affiliation, and its argument does not need to accommodate any sponsor’s dossier interests. The Journal of Clinical Epidemiology is one of the few venues whose editorial tradition — including its sustained engagement with surrogate endpoint validity, research bias, and the translation of trial evidence into policy — makes it the right home for this article.

I would be pleased to submit the full manuscript for your consideration as a Perspective.

Thank you for your time.

Michael A. S. Guth

Michael A. S. Guth, Ph.D., J.D.

Director, Health Outcomes Research & Causal Evidence

Institute for Neuroplasticity Research

[email protected] | (865) 483-8309 | www.linkedin.com/in/populationhealthmanagement

“Pioneering spirit should continue, not to conquer the planet or space, but rather to improve the quality of life.” — Bertrand Piccard

Clinical Evidence Generation & Publications Track Record:

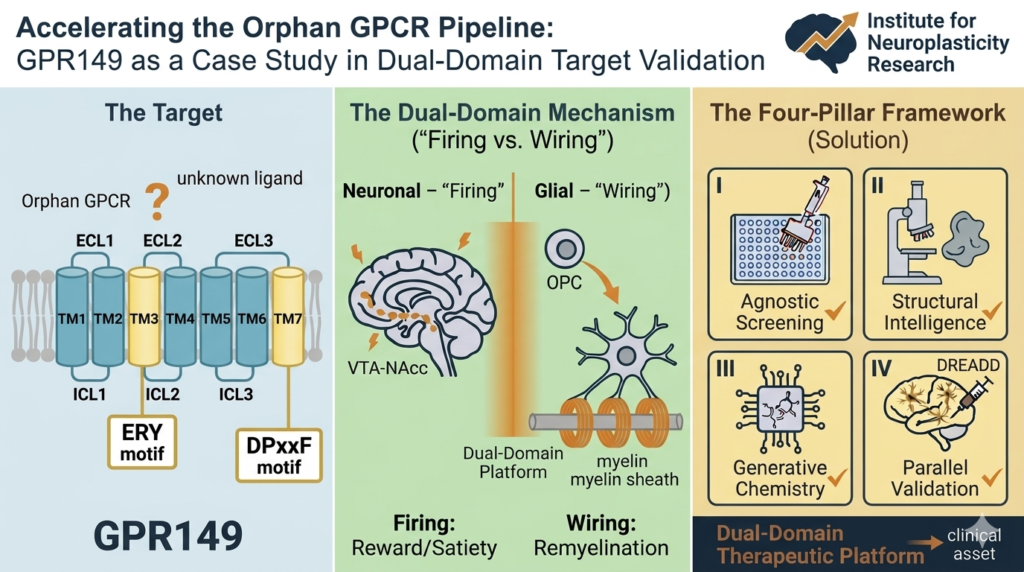

- Target validation case study: GPR149 – Drug Discovery Today (April 2026): https://authors.elsevier.com/a/1n0uP4r9Rkz1l6 [Elsevier-provided link for free access/download through June 19, 2026]

– Voltaire

So here’s my question: Should a national policy treat perfectly healthy, “at risk” people for Alzheimer’s before they show a single symptom?

I’ve spent the last several months researching and writing exactly that: a new national prophylaxis framework for Alzheimer’s prevention. Not early detection. Prevention before onset. [Spoiler alert: in June I will send the 1,500-word policy proposal to Health Affairs.]

The uncomfortable reality (why this is not academic)

Most healthcare policy waits for a diagnosis. My research flips the model: identify genetic, biomarker, or lifestyle-based risk in healthy individuals, then intervene with drugs, protocols, or monitoring.

The upside is obvious — delaying or stopping Alzheimer’s entirely. The downside is less discussed: labeling healthy people as “pre-patients,” potential over-medicalization, and a massive shift in who pays for what.

Whether you love or hate the idea, it’s coming. And that changes your industry.

The career hook (why you should care even if you hate policy)

Here’s where your job search enters the room.

A national Alzheimer’s prophylaxis policy would create entirely new roles:

• Genetic risk counselors for employers

• “Pre-diagnosis” care coordinators in insurance

• Compliance and ethics officers for at-risk data privacy

• New training specialties for geriatric nurses, data scientists, and benefits managers

If you work in health tech, HR, benefits brokerage, pharma sales, or public policy — this is a near-future skill set you can start building now. Ignoring it means competing against people who saw it coming.

What I’m actually doing with this research

I’m not just theorizing. My current writing outlines a state-level pilot framework that answers:

• Who consents for a healthy person?

• What happens if prophylaxis fails — or has side effects?

• How do employers handle “at risk” designations without discrimination?

I’ll be sharing key sections over the next few weeks. First up: the liability question that keeps corporate counsel up at night.

Voltaire was right: the right question is more revealing than any tidy answer.

My question to you — whether you’re in healthcare, tech, or just planning a 30-year career:

Are you waiting for Alzheimer’s prevention to become mainstream before you learn how it affects your job market?

The framework is designed to be implementable at the primary care level, with no specialist required. I practice what I preach: I’ve been following this framework myself for two years. The built-in design means that even if I was never at elevated risk, I’ve already realized measurable health and cost benefits. That’s the win-win.

Intrigued? The first installment drops next week.